At Databricks, we know that data is one of your most valuable assets. Our product and security teams work together to deliver an enterprise-grade Data Intelligence Platform that enables you to defend against security risks and meet your compliance obligations. Over the past year, we’re proud to have delivered new capabilities and resources such as securing data access with Azure Private Link for Databricks SQL Serverless, keeping data private with Azure firewall support for Workspace storage, protecting data in-use with Azure confidential computing, achieving FedRAMP High Agency ATO on AWS GovCloud, publishing the Databricks AI Security Framework, and sharing details on our approach to Responsible AI.

According to the 2024 Verizon Data Breach Investigations Report, the number of data breaches has increased by 30% since last year. We believe it is crucial for you to understand and appropriately use our security features and adopt recommended security best practices to mitigate data breach risks effectively.

In this blog, we’ll explain how you can leverage some of our platform’s top controls and recently released security features to establish a robust defense-in-depth posture that protects your data and AI assets. We will also provide an overview of our security best practices resources so that you can get up and running quickly.

Protect your data and AI workloads across the Databricks Data Intelligence Platform

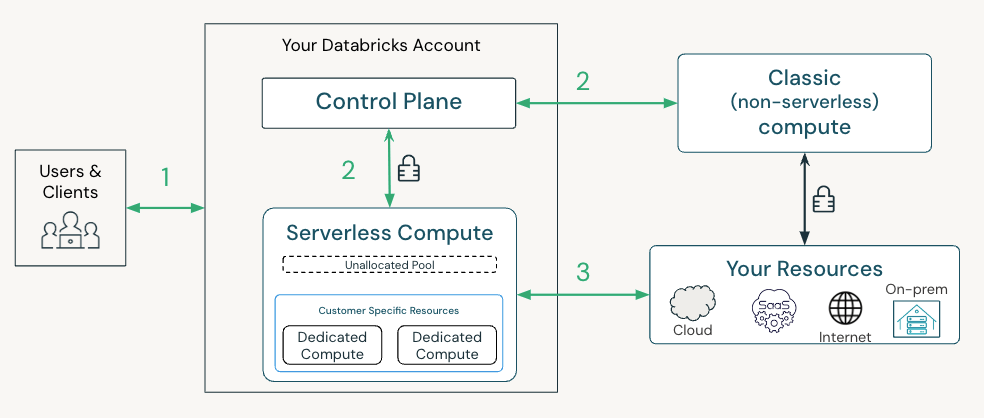

The Databricks Platform provides security guardrails to defend against account takeover and data exfiltration risks at each access point. In the image above, we outline a typical lakehouse architecture on Databricks with 3 surfaces to secure:

- Your clients, users and applications, connecting to Databricks

- Your workloads connecting to Databricks services (APIs)

- Your data being accessed from your Databricks workloads

Let’s now walk through, at a high level, some of the top controls (either enabled by default or available for you to turn on) and new security capabilities for each connection point. Our full list of recommendations based on different threat models can be found in our security best practice guides.

Connecting users and applications into Databricks (1)

To protect against access-related risks, you should use multiple factors for both authentication and authorization of users and applications into Databricks. Using only passwords is inadequate due to their susceptibility to theft, phishing, and weak user management. In fact, as of July 10, 2024, Databricks-managed passwords reached end-of-life and are no longer supported in the UI or via API authentication. Beyond this additional default security, we advise you to implement the controls below:

- Authenticate via single sign-on at the account level for all user access (AWS; SSO is automatically enabled on Azure/GCP)

- Leverage multi-factor authentication offered by your IdP to verify all users and applications that are accessing Databricks (AWS, Azure, GCP)

- Enable unified login for all workspaces using a single account-level SSO and configure SSO Emergency access with MFA for streamlined and secure access management (AWS; Databricks integrates with built-in identity providers on Azure/GCP)

- Use front-end private link on workspaces to restrict access to trusted private networks (AWS, Azure, GCP)

- Configure IP access lists on workspaces and for your account to only allow access from trusted network locations, such as your corporate network (AWS, Azure, GCP)
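
To make the idea behind IP access lists concrete, here is a minimal Python sketch that checks whether a client address falls within a set of trusted CIDR ranges. The ranges, the `TRUSTED_CIDRS` name and the `is_allowed` function are hypothetical and chosen for illustration; this models the concept only and does not describe how Databricks evaluates access lists internally.

```python
import ipaddress

# Hypothetical trusted ranges, taken from address blocks reserved for documentation.
TRUSTED_CIDRS = ["203.0.113.0/24", "198.51.100.17/32"]


def is_allowed(client_ip, allow_cidrs=TRUSTED_CIDRS):
    """Return True if client_ip falls inside any of the allowed CIDR ranges."""
    addr = ipaddress.ip_address(client_ip)
    # An address is accepted as soon as one allowed network contains it.
    return any(addr in ipaddress.ip_network(cidr) for cidr in allow_cidrs)
```

In this sketch, a request from an address inside `203.0.113.0/24` would be accepted, while any address outside both ranges would be rejected, which mirrors the "only allow access from trusted network locations" behavior described above.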

Connecting your workloads to Databricks services (2)

To prevent workload impersonation, Databricks authenticates workloads with multiple credentials during the lifecycle of the cluster. Our recommendations and available controls depend on your deployment architecture. At a high level:

- For Classic clusters that run on your network, we recommend configuring a back-end private link between the compute plane and the control plane. Configuring the back-end private link ensures that your cluster can only be authenticated over that dedicated and private channel.

- For Serverless, Databricks automatically provides a defense-in-depth security posture on our platform using a combination of application-level credentials, mTLS client certificates and private links to mitigate Workspace impersonation risks.

Connecting from Databricks to your storage and data sources (3)

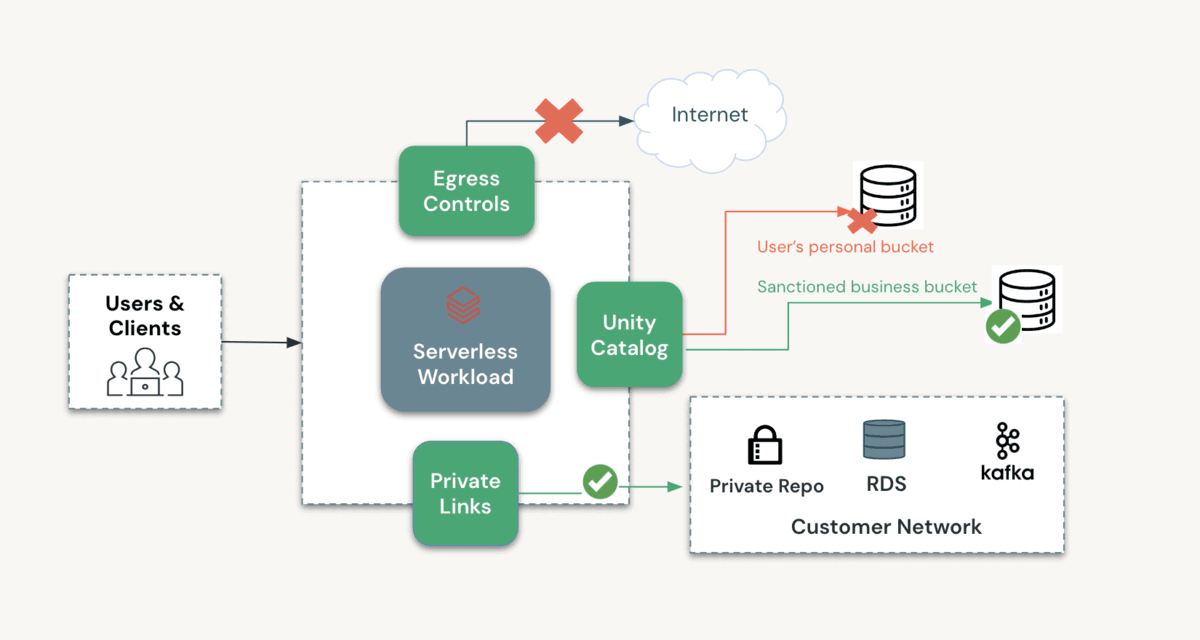

To ensure that data can only be accessed by the right user and workload on the right Workspace, and that workloads can only write to authorized storage locations, we recommend leveraging the following features:

- Using Unity Catalog to govern access to data: Unity Catalog provides several layers of protection, including fine-grained access controls and time-bound down-scoped credentials that are only accessible to trusted code by default.

- Leveraging Mosaic AI Gateway: Now in Public Preview, Mosaic AI Gateway allows you to monitor and control the usage of both external models and models hosted on Databricks across your enterprise.

- Configuring access from authorized networks: You can configure access policies using S3 bucket policies on AWS, Azure storage firewall and VPC Service Controls on GCP.

- With Classic clusters, you can lock down access to your network via the above-listed controls.

- With Serverless, you can lock down access to the Serverless network (AWS, Azure) or to a dedicated private endpoint on Azure. On Azure, you can now enable the storage firewall for your Workspace storage (DBFS root) account.

- Resources external to Databricks, such as external models or storage accounts, can be configured with dedicated and private connectivity. Here is a deployment guide for accessing Azure OpenAI, one of our most requested scenarios.

- Configuring egress controls to prevent access to unauthorized storage locations: With Classic clusters, you can configure egress controls on your network. With SQL Serverless, Databricks does not allow internet access from untrusted code such as Python UDFs. To learn how we’re enhancing egress controls as you adopt more Serverless products, please use this form to join our previews.
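
As one illustration of "configuring access from authorized networks" on AWS, the Python sketch below builds an S3 bucket policy document that denies requests that do not arrive through a trusted VPC endpoint. The function name, bucket name and endpoint IDs are hypothetical, and the policy shape is a common AWS pattern based on the `aws:SourceVpce` condition key rather than a policy published in this blog. Treat it as a starting point under those assumptions, and review it carefully before use, since a broad deny statement like this one can also block your own administrative access.

```python
import json


def vpce_only_bucket_policy(bucket, vpce_ids):
    """Build a bucket policy that denies S3 access from outside the given VPC endpoints.

    Both arguments are illustrative placeholders supplied by the caller.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyAccessOutsideTrustedVpcEndpoints",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                # Cover both the bucket itself and every object in it.
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
                # The deny applies when the request's VPC endpoint is not in the list.
                "Condition": {
                    "StringNotEquals": {"aws:SourceVpce": list(vpce_ids)}
                },
            }
        ],
    }


def policy_as_json(bucket, vpce_ids):
    """Serialize the policy so it can be pasted into a bucket policy editor."""
    return json.dumps(vpce_only_bucket_policy(bucket, vpce_ids), indent=2)
```

Calling `policy_as_json("example-bucket", ["vpce-0123456789abcdef0"])` returns the policy as formatted JSON; the equivalent controls on Azure and GCP (storage firewall and VPC Service Controls) are configured through their own mechanisms and are not covered by this sketch.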

The diagram at the top of this section outlines how you can configure a private and secure environment for processing your data as you adopt Databricks Serverless products. As described above, multiple layers of protection can secure all access to and from this environment.

Define, deploy and monitor your data and AI workloads with industry-leading security best practices

Now that we have outlined a set of key controls available to you, you are probably wondering how you can quickly operationalize them for your business. Our Databricks Security team recommends taking a “define, deploy, and monitor” approach using the resources they have developed from their experience working with hundreds of customers.

- Define: You should configure your Databricks environment by reviewing our best practices together with the risks specific to your organization. We’ve crafted comprehensive best practice guides for Databricks deployments on all three major clouds. These documents offer a checklist of security practices, threat models, and patterns distilled from our enterprise engagements.

- Deploy: Terraform templates make deploying secure Databricks workspaces easy. You can programmatically deploy workspaces and the required cloud infrastructure using the official Databricks Terraform provider. These unified Terraform templates are preconfigured with hardened security settings similar to those used by our most security-conscious customers. View our GitHub to get started on AWS, Azure, and GCP.

- Monitor: The Security Analysis Tool (SAT) can be used to monitor adherence to security best practices in Databricks workspaces on an ongoing basis. We recently upgraded the SAT to streamline setup and enhance checks, aligning them with the Databricks AI Security Framework (DASF) for improved coverage of AI security risks.

Stay ahead in data and AI security

The Databricks Data Intelligence Platform provides an enterprise-grade defense-in-depth approach for protecting data and AI assets. For recommendations on mitigating security risks, please refer to our security best practices guides for your chosen cloud(s). For a summarized checklist of controls related to unauthorized access, please refer to this document.

We continuously enhance our platform based on your feedback, evolving industry standards, and emerging security threats to better meet your needs and stay ahead of potential risks. To stay informed, bookmark our Security and Trust blog, head over to our YouTube channel, and visit the Databricks Security and Trust Center.