Introduction

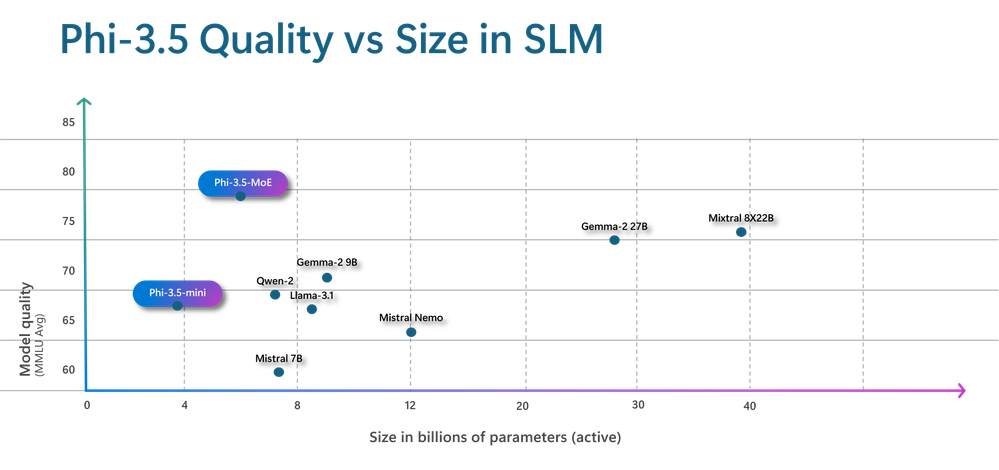

Phi-3 is the latest model collection in Microsoft’s Small Language Models (SLMs) family. The models outperform models of similar and larger sizes on a variety of language, reasoning, coding, and math benchmarks, and they are designed to be highly capable and cost-effective. With Phi-3 models available, Azure customers have access to a wider range of high-quality models, giving them more practical choices for building generative AI applications. Since the April 2024 launch, Azure has gathered a wealth of useful feedback from users and community members on areas where the Phi-3 SLMs could improve.

The result is the Phi-3.5 SLMs, the newest members of the Phi family: Phi-3.5-mini, Phi-3.5-vision, and Phi-3.5-MoE, a Mixture-of-Experts (MoE) model. Phi-3.5-mini improves multilingual support and offers a 128K context length. Phi-3.5-vision improves multi-frame image understanding and reasoning while also boosting performance on single-image benchmarks. Phi-3.5-MoE, with 16 experts and 6.6B active parameters, offers low latency, multilingual support, and strong safety measures, and it surpasses larger models while maintaining the efficacy of the Phi models.

Phi-3.5-MoE: Mixture-of-Experts

Phi-3.5-MoE is the largest and newest model in the Phi 3.5 SLM release. It comprises 16 experts, each containing 3.8B parameters. With a total model size of 42B parameters, it activates 6.6B parameters using two experts. This MoE model outperforms a dense model of comparable size in both quality and performance. It supports more than 20 languages. Like its Phi-3 counterparts, the MoE model uses a robust safety post-training strategy built on a mix of open-source and proprietary synthetic instruction and preference datasets. The post-training process combines Supervised Fine-Tuning (SFT) and Direct Preference Optimization (DPO), using both synthetic and human-labeled datasets. These include multiple safety categories and datasets emphasizing helpfulness and harmlessness. Phi-3.5-MoE also supports a context length of up to 128K, which lets it handle a variety of long-context workloads.
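To make the "two experts out of 16" idea concrete, here is a minimal pure-Python sketch of generic top-2 routing. It is an illustration under stated assumptions, not the actual Phi-3.5-MoE router: the names `top2_route` and `moe_forward`, the toy scalar experts, and the softmax-then-renormalize weighting are all hypothetical choices made for this example.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top2_route(router_logits):
    """Pick the two highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(router_logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:2]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]

def moe_forward(x, experts, router_logits):
    """Run only the two selected experts and mix their outputs by routing weight."""
    return sum(w * experts[i](x) for i, w in top2_route(router_logits))

# Toy setup (assumption): 16 scalar functions stand in for the expert networks.
experts = [lambda x, k=k: (k + 1) * x for k in range(16)]
router_logits = [0.0] * 16
router_logits[3] = 2.0  # experts 3 and 7 receive the highest router scores
router_logits[7] = 2.0

routes = top2_route(router_logits)
output = moe_forward(1.0, experts, router_logits)
print(routes, output)
```

Only the two selected experts are evaluated for each input, which is why the number of active parameters stays far below the total parameter count.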

Training Data of Phi 3.5 MoE

The training data of Phi 3.5 MoE includes a wide variety of sources, totaling 4.9 trillion tokens (including 10% multilingual), and is a combination of:

- Publicly available documents filtered rigorously for quality, selected high-quality educational data, and code;

- Newly created synthetic, “textbook-like” data to teach math, coding, common sense reasoning, and general knowledge of the world (science, daily activities, theory of mind, etc.);

- High-quality chat format supervised data covering various topics to reflect human preferences, such as instruction-following, truthfulness, honesty, and helpfulness.

Azure focuses on the quality of data that could potentially improve the model’s reasoning ability, and it filters the publicly available documents to contain the right level of knowledge. For example, the result of a Premier League game on a particular day might be good training data for frontier models, but such information needs to be removed to leave more model capacity for reasoning in small models. More details about the data can be found in the Phi-3 Technical Report.

Training Phi 3.5 MoE took 23 days and used 4.9T tokens of training data. The supported languages are Arabic, Chinese, Czech, Danish, Dutch, English, Finnish, French, German, Hebrew, Hungarian, Italian, Japanese, Korean, Norwegian, Polish, Portuguese, Russian, Spanish, Swedish, Thai, Turkish, and Ukrainian.

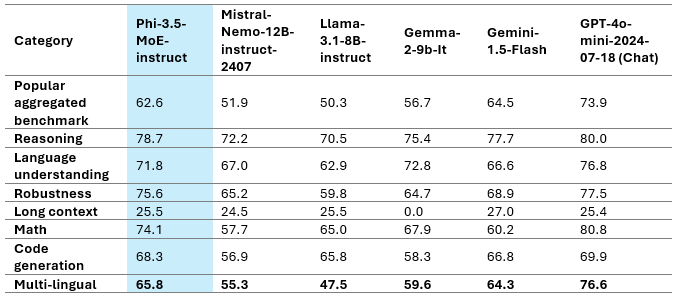

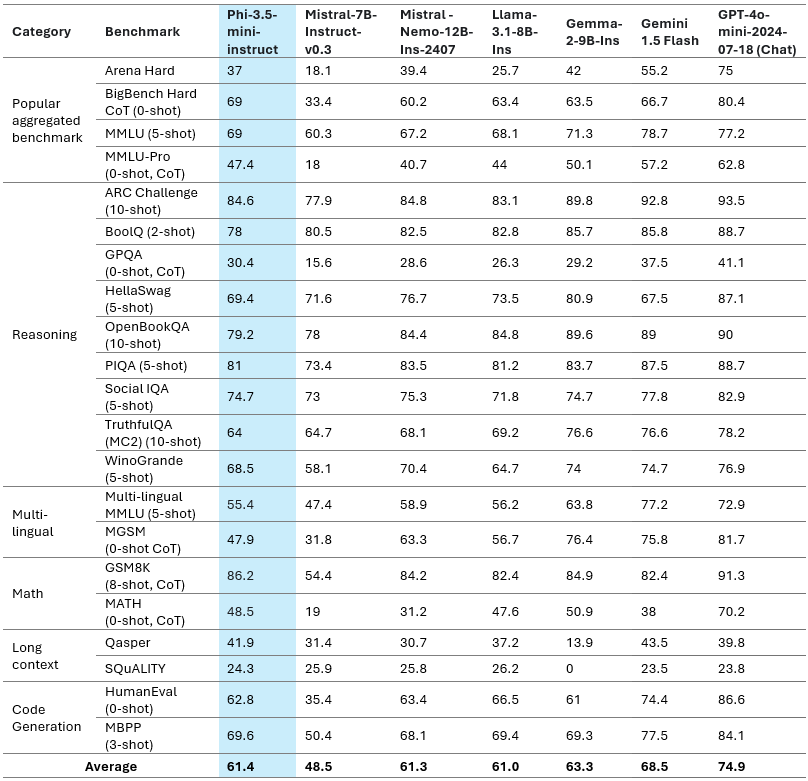

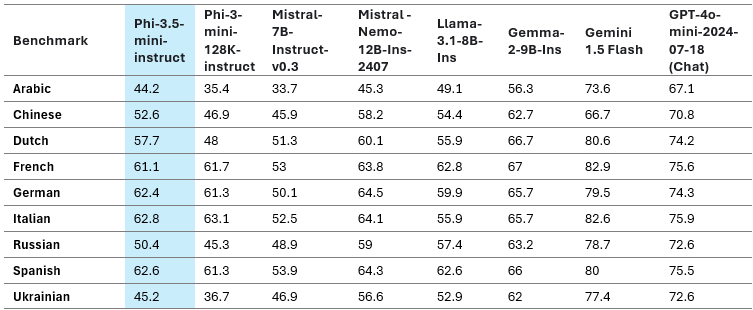

The table above shows Phi-3.5-MoE model quality across various capabilities. Phi 3.5 MoE performs better than some larger models in several categories. With only 6.6B active parameters, Phi-3.5-MoE achieves a similar level of language understanding and math as much larger models, and it outperforms bigger models in reasoning capability. The model also provides good capacity for fine-tuning on various tasks.

The table above uses the multilingual MMLU, MEGA, and multilingual MMLU-pro datasets to demonstrate Phi-3.5-MoE’s multilingual capability. Even with only 6.6B active parameters, the model outperforms competing models with substantially more active parameters on multilingual tasks.

Phi-3.5-mini

The Phi-3.5-mini model underwent further pre-training using multilingual synthetic and high-quality filtered data. Subsequent post-training steps, such as Supervised Fine-Tuning (SFT), Proximal Policy Optimization (PPO), and Direct Preference Optimization (DPO), were then carried out. These steps used synthetic, translated, and human-labeled datasets.
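As a rough illustration of what the DPO step optimizes, the sketch below computes the standard DPO loss for a single preference pair from sequence log-probabilities. This is the generic published objective, not Microsoft's training code; the function name `dpo_loss`, the example log-probabilities, and the default `beta=0.1` are assumptions chosen for illustration.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one (chosen, rejected) response pair.

    Each argument is the total log-probability a model assigns to a response.
    The loss is -log(sigmoid(beta * margin)), where the margin measures how much
    more the policy prefers the chosen response than the reference model does.
    """
    chosen_shift = policy_chosen_logp - ref_chosen_logp
    rejected_shift = policy_rejected_logp - ref_rejected_logp
    z = -beta * (chosen_shift - rejected_shift)
    # -log(sigmoid(-z)) equals softplus(z); this form avoids overflow for large |z|.
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

# Policy identical to the reference: no preference learned yet, loss is log(2).
neutral = dpo_loss(-1.0, -1.0, -1.0, -1.0)

# Policy now favors the chosen response more than the reference does: loss drops.
improved = dpo_loss(-1.0, -3.0, -2.0, -2.0)
print(neutral, improved)
```

The loss falls as the policy shifts probability toward the preferred response relative to the frozen reference model, which is the behavior preference datasets are meant to induce.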

Training Data of Phi 3.5 Mini

The training data of Phi 3.5 Mini includes a wide variety of sources, totaling 3.4 trillion tokens, and is a combination of:

- Publicly available documents filtered rigorously for quality, selected high-quality educational data, and code;

- Newly created synthetic, “textbook-like” data to teach math, coding, common sense reasoning, and general knowledge of the world (science, daily activities, theory of mind, etc.);

- High-quality chat format supervised data covering various topics to reflect human preferences, such as instruction-following, truthfulness, honesty, and helpfulness.

Model Quality

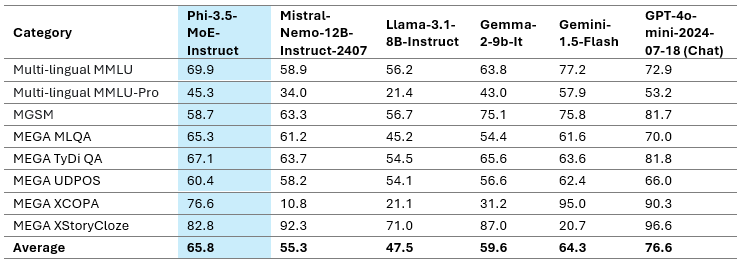

The table above gives a quick overview of model quality on important benchmarks. Despite its compact size of only 3.8B parameters, this efficient model matches, if not outperforms, other larger models.

Multi-lingual Capability

Phi-3.5-mini is the latest update to the 3.8B model. By incorporating additional continual pre-training and post-training data, the model significantly improved its multilingual capability, multi-turn conversation quality, and reasoning ability.

Multilingual support is a major advance of Phi-3.5-mini over Phi-3-mini. Arabic, Dutch, Finnish, Polish, Thai, and Ukrainian benefited the most from the new Phi 3.5 mini, with 25–50% performance improvements. Viewed in a broader context, Phi-3.5-mini shows the best performance of any sub-8B model in several languages, including English. Note that the model uses a 32K vocabulary and has been optimized for higher-resource languages; using it for lower-resource languages without additional fine-tuning is not recommended.

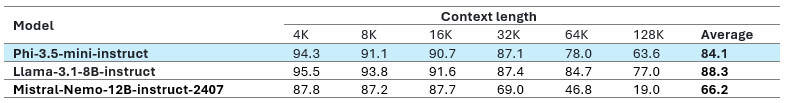

Long Context

With support for a 128K context length, Phi-3.5-mini is an excellent choice for applications like information retrieval, long-document question answering, and summarizing lengthy documents or meeting transcripts. Phi-3.5 performs better than the Gemma-2 family, which can handle only an 8K context length. Phi-3.5-mini also competes closely with considerably larger open-weight models like Mistral-7B-instruct-v0.3, Llama-3.1-8B-instruct, and Mistral-Nemo-12B-instruct-2407. Phi-3.5-mini-instruct is the only model in this category that combines just 3.8B parameters, a 128K context length, and multilingual support. It is important to note that Azure chose to support more languages while keeping English performance consistent across various tasks. Because of the model’s limited capacity, its English knowledge may be better than its knowledge in other languages. For tasks requiring a high level of multilingual understanding, Azure suggests using the model in a Retrieval-Augmented Generation (RAG) setup.
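The RAG suggestion above can be sketched in a few lines: retrieve the passages most relevant to the question, then place them in the prompt so the model answers from supplied text rather than from its own limited knowledge. Everything below is a hypothetical toy, assuming a naive word-overlap retriever and made-up helper names (`overlap_score`, `retrieve`, `build_messages`); a real setup would typically use an embedding-based retriever.

```python
def words(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return {w.strip(".,?!") for w in text.lower().split()}

def overlap_score(query, passage):
    """Count distinct words shared by the query and the passage."""
    return len(words(query) & words(passage))

def retrieve(query, passages, k=2):
    """Return the k passages that share the most words with the query."""
    return sorted(passages, key=lambda p: overlap_score(query, p), reverse=True)[:k]

def build_messages(query, passages, k=2):
    """Build chat messages that put the retrieved context ahead of the question."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages, k))
    return [
        {"role": "system", "content": "Answer using only the provided context."},
        {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {query}"},
    ]

# Toy knowledge base (assumption): facts restated from this article.
passages = [
    "Phi-3.5-mini supports a 128K context length.",
    "Phi-3.5-MoE activates 6.6B parameters using two experts.",
    "Phi-3.5-vision accepts multi-frame image input.",
]
rag_messages = build_messages("What context length does Phi-3.5-mini support?", passages, k=1)
print(rag_messages[1]["content"])
```

The resulting list has the same message structure as the chat examples later in this article, so it could be passed to a text-generation pipeline unchanged.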

Phi-3.5-vision with Multi-frame Input

Training Data of Phi 3.5 Vision

Azure’s training data includes a wide variety of sources and is a combination of:

- Publicly available documents filtered rigorously for quality, selected high-quality educational data, and code;

- Selected high-quality image-text interleaved data;

- Newly created synthetic, “textbook-like” data for teaching math, coding, common sense reasoning, and general knowledge of the world (science, daily activities, theory of mind, etc.); newly created image data, e.g., charts, tables, diagrams, and slides; and newly created multi-image and video data, e.g., short video clips and pairs of similar images;

- High-quality chat format supervised data covering various topics to reflect human preferences, such as instruction-following, truthfulness, honesty, and helpfulness.

The data collection process involved sourcing information from publicly available documents and meticulously filtering out undesirable documents and images. To safeguard privacy, we carefully filtered various image and text data sources to remove or scrub any potentially personal data from the training data.

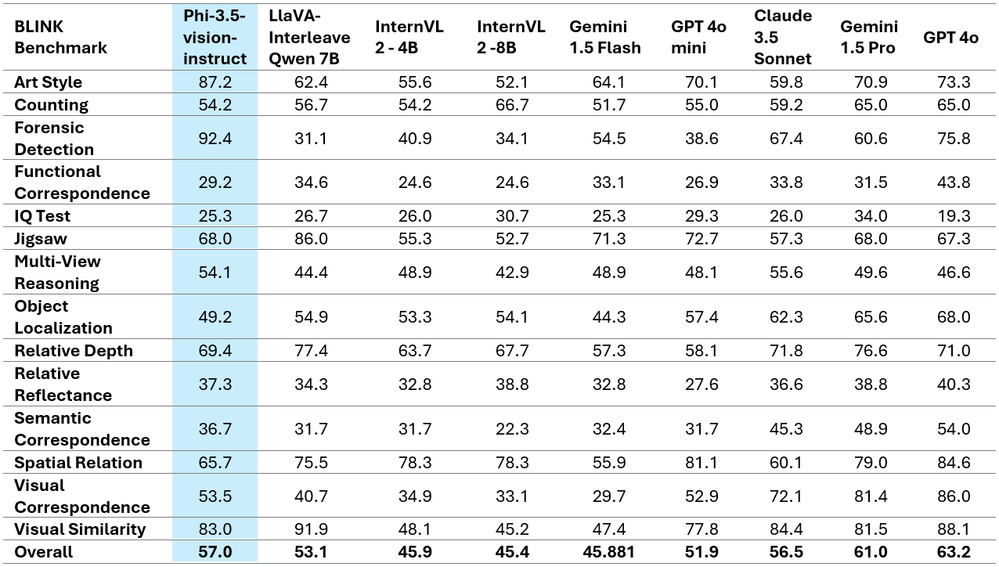

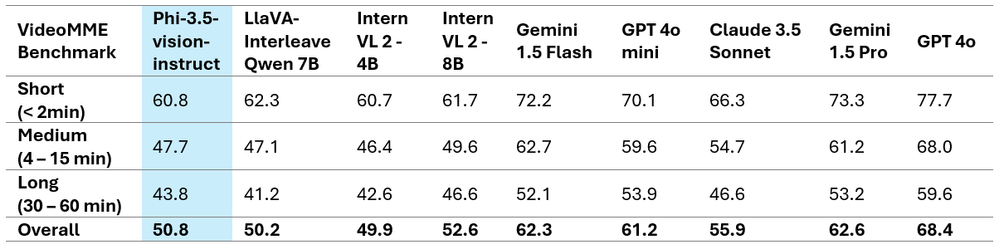

Phi-3.5-vision delivers state-of-the-art multi-frame image understanding and reasoning capabilities, developed in response to valuable user feedback. This advance enables detailed image comparison, multi-image summarization and storytelling, and video summarization, with a wide range of applications across many scenarios.

Notably, Phi-3.5-vision has also shown performance gains across several single-image benchmarks. For example, it raised MMBench performance from 80.5 to 81.9 and MMMU performance from 40.4 to 43.0. In addition, TextVQA, the document-comprehension benchmark, rose from 70.9 to 72.0.

The tables above showcase the improved performance metrics and present detailed comparative results on two well-known multi-image/video benchmarks. It is important to note that Phi-3.5-vision does not support multilingual use cases, and using it for multilingual scenarios without additional fine-tuning is not recommended.

Trying out Phi 3.5 Mini

Using Hugging Face

We will use a Kaggle notebook to run Phi 3.5 Mini, since it accommodates the Phi 3.5 mini model better than Google Colab. Note: Make sure to set the accelerator to GPU T4 x2.

Step 1: Importing necessary libraries

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

# Fix the random seed so repeated runs behave the same way
torch.random.manual_seed(0)

Step 2: Loading the Model and Tokenizer

# Download the instruct model and place it on the GPU
model = AutoModelForCausalLM.from_pretrained(
    "microsoft/Phi-3.5-mini-instruct",
    device_map="cuda",
    torch_dtype="auto",
    trust_remote_code=True,
)
tokenizer = AutoTokenizer.from_pretrained("microsoft/Phi-3.5-mini-instruct")

Step 3: Preparing messages

messages = [
    {"role": "system", "content": "You are a helpful AI assistant."},
    {"role": "user", "content": "Tell me about microsoft"},
]

- “role”: “system”: Sets the behavior of the AI model (in this case, as a “helpful AI assistant”).

- “role”: “user”: Represents the user’s input.

Step 4: Creating the Pipeline

pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
)

This creates a pipeline for text generation using the specified model and tokenizer. The pipeline abstracts the complexities of tokenization, model execution, and decoding, providing a simple interface for generating text.
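One of the steps the pipeline hides is turning the list of role/content messages into a single prompt string. The sketch below shows roughly what that looks like, assuming the `<|role|>` ... `<|end|>` chat format described on the Phi-3.5-mini-instruct model card. The helper `format_phi3_chat` is hypothetical and only for illustration; in real code the tokenizer's own `apply_chat_template` method is the authoritative source of the template.

```python
def format_phi3_chat(messages):
    """Render chat messages into a single prompt string.

    Assumption: each turn is written as <|role|>, a newline, the content, and
    <|end|>, and the prompt ends with <|assistant|> so the model writes the reply.
    """
    parts = []
    for message in messages:
        parts.append(f"<|{message['role']}|>\n{message['content']}<|end|>\n")
    parts.append("<|assistant|>\n")
    return "".join(parts)

messages = [
    {"role": "system", "content": "You are a helpful AI assistant."},
    {"role": "user", "content": "Tell me about microsoft"},
]
prompt = format_phi3_chat(messages)
print(prompt)
```

Seeing the flattened prompt makes it clearer why the system message shapes the model's behavior: it is simply the first turn in the text the model continues.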

Step 5: Setting Generation Arguments

generation_args = {
    "max_new_tokens": 500,
    "return_full_text": False,
    "temperature": 0.0,
    "do_sample": False,
}

These arguments control how the model generates text.

- max_new_tokens=500: The maximum number of tokens to generate.

- return_full_text=False: Only the generated text (not the input) will be returned.

- temperature=0.0: Controls randomness in the output. With sampling disabled, as it is here, generation is already deterministic and the model produces the most likely output.

- do_sample=False: Disables sampling, so the model always chooses the most probable next token.
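To show how `do_sample` and `temperature` interact, here is a small pure-Python sketch of next-token selection. It is a simplified illustration, not the Transformers implementation: the function `next_token` and the toy logits are assumptions, and real decoding operates on model outputs over the full vocabulary.

```python
import math
import random

def next_token(logits, do_sample=False, temperature=1.0, rng=None):
    """Pick the index of the next token from raw scores (logits).

    With do_sample=False this is greedy decoding: always take the top score.
    With do_sample=True the scores are divided by the temperature, turned into
    softmax weights, and one index is drawn at random from that distribution.
    """
    if not do_sample:
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [x / temperature for x in logits]  # temperature must be > 0 here
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]
    rng = rng or random.Random()
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

logits = [1.0, 3.5, 0.2, 2.9]  # toy scores for a 4-token vocabulary

# Greedy decoding returns the same token on every call.
greedy = [next_token(logits) for _ in range(5)]

# Sampling can return different tokens; a seeded generator keeps runs repeatable.
rng = random.Random(0)
sampled = [next_token(logits, do_sample=True, temperature=1.0, rng=rng) for _ in range(5)]
print(greedy, sampled)
```

Because the greedy branch never touches the temperature, the value only matters once sampling is enabled, where lower temperatures concentrate probability on the top-scoring tokens.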

Step 6: Generating Text

output = pipe(messages, **generation_args)

print(output[0]['generated_text'])

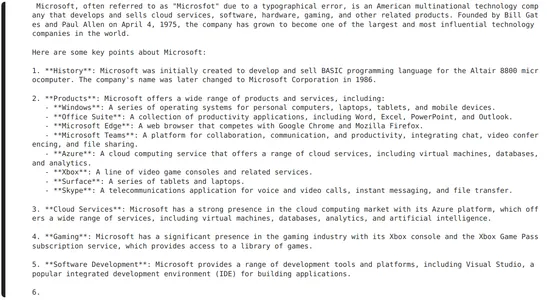

Using Azure AI Studio

We can try Phi 3.5 Mini Instruct in Azure AI Studio through its interface. Azure AI Studio has a section called “Try it out”. Below is a snapshot of using Phi 3.5 Mini.

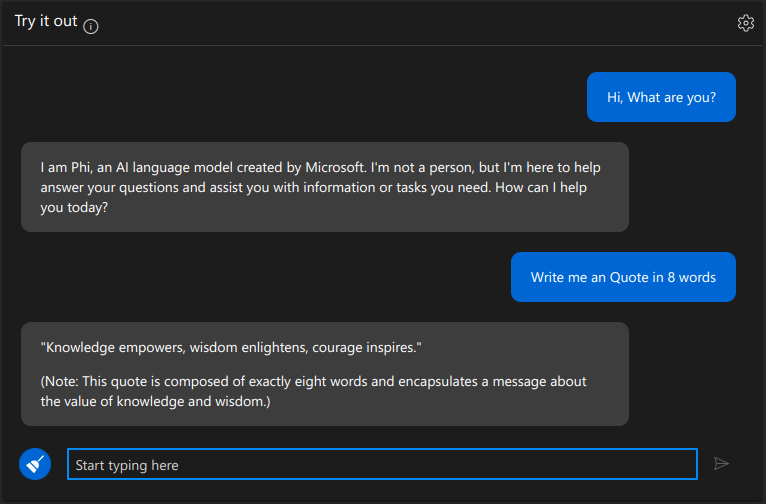

Using HuggingChat from Hugging Face

Phi 3.5 Mini can also be tried on HuggingChat.

Trying Phi 3.5 Vision

Using Spaces from Hugging Face

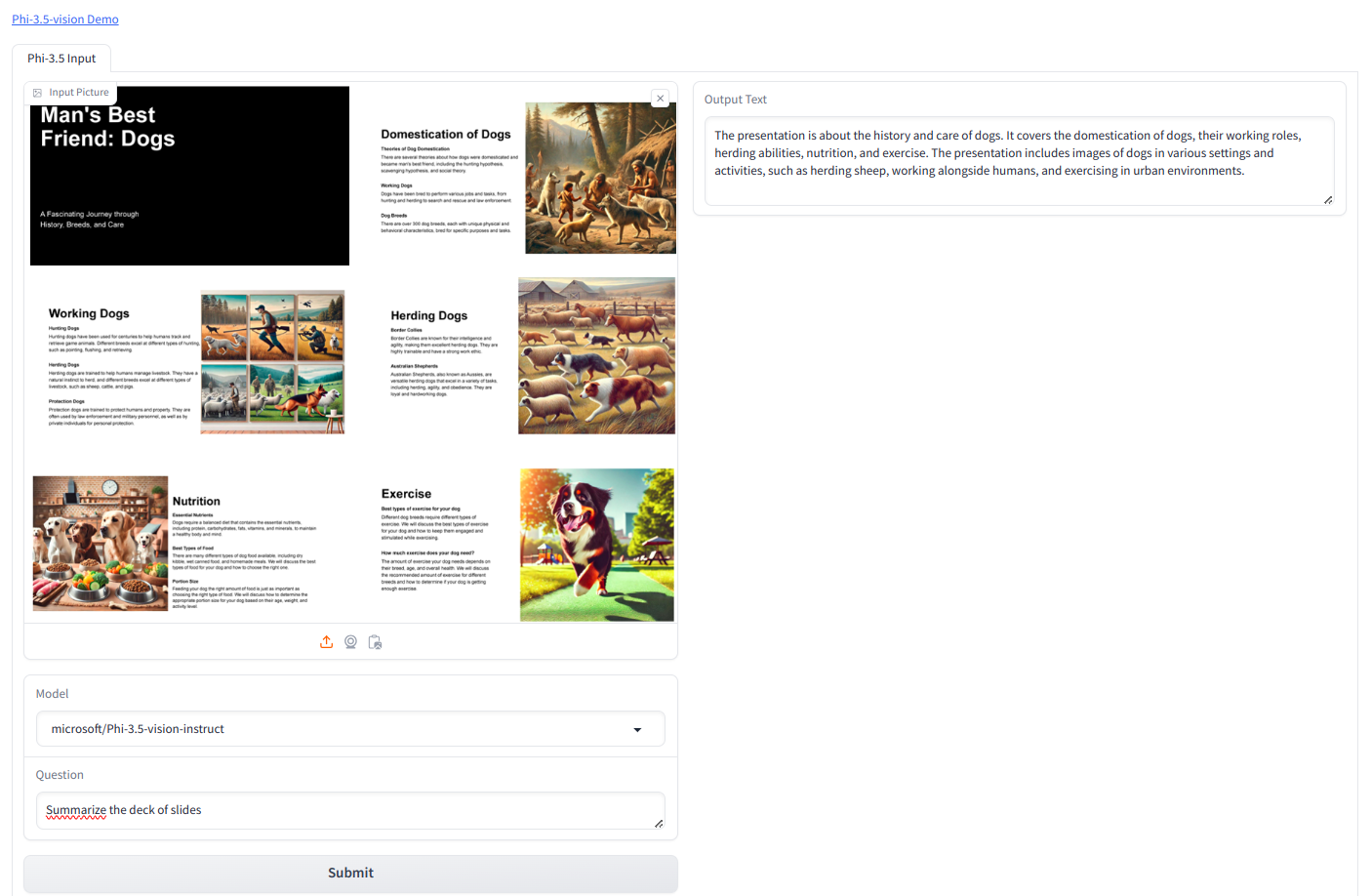

Since Phi 3.5 Vision is a GPU-intensive model, it cannot be run on the free tiers of Colab and Kaggle. Hence, I have used Hugging Face Spaces to try Phi 3.5 Vision.

We will be using the image below.

The prompt we used is “Summarize the deck of slides”.

Output

The presentation is about the history and care of dogs. It covers the domestication of dogs, their working roles, herding abilities, nutrition, and exercise. The presentation includes images of dogs in various settings and activities, such as herding sheep, working alongside humans, and exercising in urban environments.
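For readers who want to send a similar multi-frame prompt from code rather than through the Spaces interface, the sketch below builds the user message for several slide images. It assumes the numbered `<|image_N|>` placeholder convention described on the Phi-3.5-vision-instruct model card; the helper `build_multi_image_message` is hypothetical, and loading the images and running the model are left out.

```python
def build_multi_image_message(num_images, instruction):
    """Build a single user message that refers to num_images attached images.

    Assumption: each image is referenced by a numbered placeholder such as
    <|image_1|>, one per line, placed before the text instruction.
    """
    placeholders = "".join(f"<|image_{i}|>\n" for i in range(1, num_images + 1))
    return [{"role": "user", "content": placeholders + instruction}]

# Three slide images followed by the prompt used in this article.
vision_messages = build_multi_image_message(3, "Summarize the deck of slides")
print(vision_messages[0]["content"])
```

The placeholders tell the model where each frame belongs in the conversation, which is what allows one prompt to ask about a whole deck of slides at once.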

Conclusion

Phi-3.5-mini is a unique LLM with 3.8B parameters, a 128K context length, and multilingual support. It balances broad language support with English knowledge density, and it is best used in a Retrieval-Augmented Generation setup for multilingual tasks. Phi-3.5-MoE has 16 small experts and 6.6B active parameters; it delivers high-quality performance with reduced latency, supports a 128K context length and multiple languages, and can be customized for various applications. Phi-3.5-vision improves single-image benchmark performance. The Phi-3.5 SLM family offers cost-effective, high-capability options for the open-source community and Azure customers.

Frequently Asked Questions

Q1. What are Phi-3.5 models?

Ans. Phi-3.5 models are the latest in Microsoft’s Small Language Models (SLMs) family, designed for high performance and efficiency in language, reasoning, coding, and math tasks.

Q2. What is Phi-3.5-MoE?

Ans. Phi-3.5-MoE is a Mixture-of-Experts model with 16 experts, supporting 20+ languages and a 128K context length, and designed to outperform larger models in reasoning and multilingual tasks.

Q3. What is Phi-3.5-mini?

Ans. Phi-3.5-mini is a compact model with 3.8B parameters, a 128K context length, and improved multilingual support. It excels in English and several other languages.

Q4. Where can I try Phi-3.5 SLMs?

Ans. You can try Phi-3.5 SLMs on platforms like Hugging Face and Azure AI Studio, where they are available for various AI applications.