Introduction

Apache Iceberg has recently grown in popularity because it adds data warehouse-like capabilities to your data lake, making it easier to analyze all your data, structured and unstructured. It offers several benefits such as schema evolution, hidden partitioning, time travel, and more that improve the productivity of data engineers and data analysts. However, you need to regularly maintain Iceberg tables to keep them in a healthy state so that read queries can perform faster. This blog discusses a few problems that you might encounter with Iceberg tables and offers strategies on how to optimize them in each of those scenarios. You can take advantage of a combination of the strategies provided and adapt them to your particular use cases.

Problem with too many snapshots

Every time a write operation occurs on an Iceberg table, a new snapshot is created. Over time this can cause the table’s metadata.json file to become bloated and the number of old and potentially unnecessary data/delete files in the data store to grow, increasing storage costs. A bloated metadata.json file can also increase both read and write times because a large metadata file needs to be read or written every time. Regularly expiring snapshots is recommended to delete data files that are no longer needed and to keep the size of table metadata small. Expiring snapshots is a relatively cheap operation and uses metadata to determine newly unreachable files.

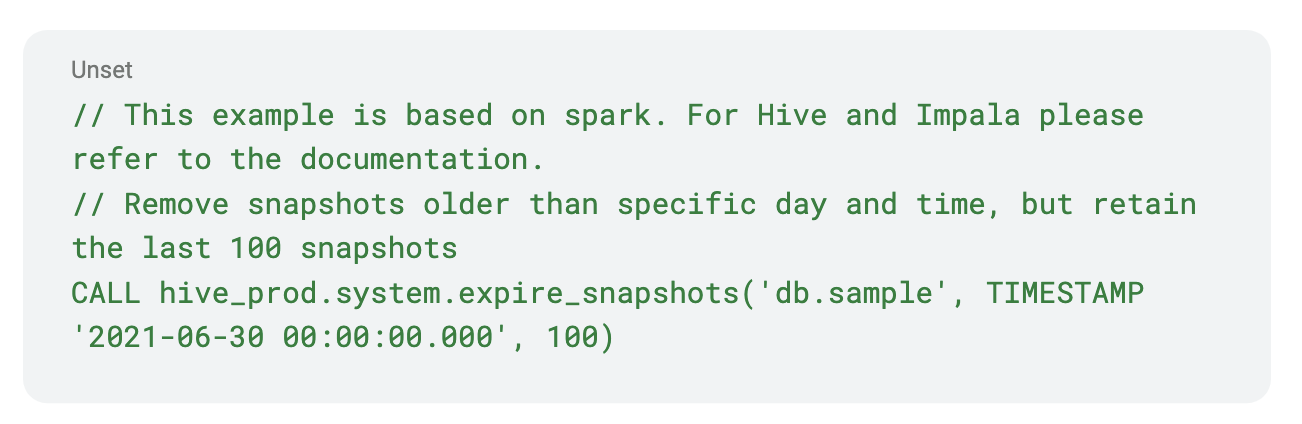

Solution: expire snapshots

We can expire old snapshots using the expire_snapshots procedure.
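As a minimal sketch, the helper below builds the Spark SQL `CALL` statement for the `expire_snapshots` procedure. The catalog name `my_catalog`, the table `db.events`, the cutoff date, and the helper name itself are placeholders chosen for illustration, not values from this blog.

```python
from datetime import datetime


def expire_snapshots_sql(catalog: str, table: str,
                         older_than: datetime, retain_last: int = 1) -> str:
    """Build a CALL statement for Iceberg's expire_snapshots Spark procedure."""
    ts = older_than.strftime("%Y-%m-%d %H:%M:%S")
    return (
        f"CALL {catalog}.system.expire_snapshots("
        f"table => '{table}', "
        f"older_than => TIMESTAMP '{ts}', "
        f"retain_last => {retain_last})"
    )


# Hypothetical catalog and table names, for illustration only.
expire_stmt = expire_snapshots_sql(
    "my_catalog", "db.events", datetime(2023, 1, 1), retain_last=5
)
print(expire_stmt)
# With an active SparkSession named `spark` (assumed), run: spark.sql(expire_stmt)
```

Keeping a few recent snapshots through `retain_last` preserves some time travel history while still letting older files be cleaned up.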

Problem with suboptimal manifests

Over time the snapshots might reference many manifest files. This could cause a slowdown in query planning and increase the runtime of metadata queries. Furthermore, when first created the manifests may not lend themselves well to partition pruning, which increases the overall runtime of the query. On the other hand, if the manifests are well organized into discrete bounds of partitions, then partition pruning can prune away entire subtrees of data files.

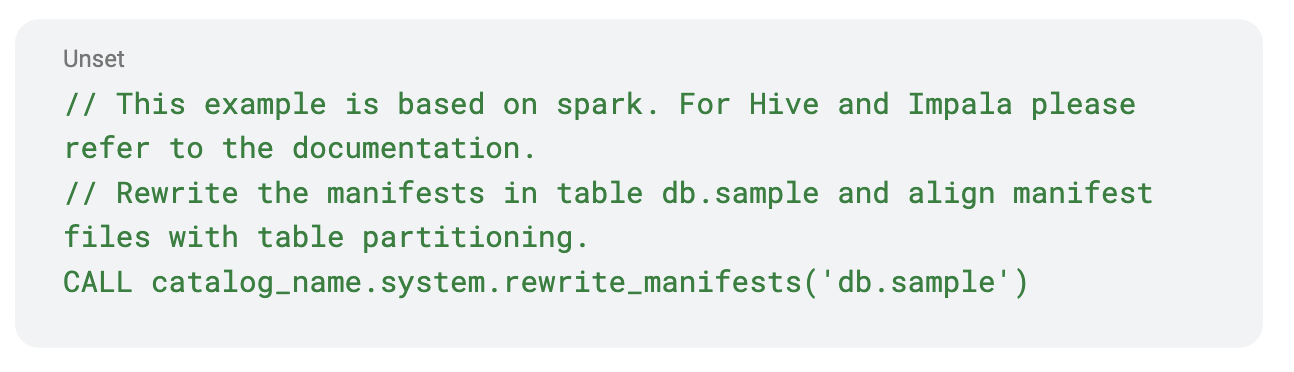

Solution: rewrite manifests

We can solve the too many manifest files problem with rewrite_manifests and potentially get a well-balanced hierarchical tree of data files.
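A small sketch of how this might look from Python: one helper builds a query against the table's `manifests` metadata table to see how many manifests exist, and another builds the `rewrite_manifests` call. The catalog and table names and both helper names are assumptions made for the example.

```python
def count_manifests_sql(catalog: str, table: str) -> str:
    """Query the manifests metadata table to see how many manifests exist."""
    return f"SELECT COUNT(*) AS manifest_count FROM {catalog}.{table}.manifests"


def rewrite_manifests_sql(catalog: str, table: str) -> str:
    """Build a CALL statement for Iceberg's rewrite_manifests Spark procedure."""
    return f"CALL {catalog}.system.rewrite_manifests(table => '{table}')"


# Hypothetical catalog and table names, for illustration only.
count_stmt = count_manifests_sql("my_catalog", "db.events")
manifests_stmt = rewrite_manifests_sql("my_catalog", "db.events")
print(count_stmt)
print(manifests_stmt)
# With an active SparkSession named `spark` (assumed):
#   spark.sql(count_stmt).show(); spark.sql(manifests_stmt)
```

Running the count before and after the rewrite is one simple way to check whether the procedure changed anything.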

Problem with delete files

Background

merge-on-read vs copy-on-write

Since Iceberg V2, whenever existing data needs to be updated (via delete, update, or merge statements), there are two options available: copy-on-write and merge-on-read. With the copy-on-write option, the data files affected by a delete, update, or merge operation are read and entirely new data files are written with the necessary changes. Iceberg doesn’t delete the old data files. So if you want to query the table as it was before the modifications were applied, you can use the time travel feature of Iceberg. In a later blog, we will go into details about how to take advantage of the time travel feature. If you decide that the old data files are not needed any more, you can get rid of them by expiring the older snapshot as discussed above.

With the merge-on-read option, instead of rewriting the entire data files at write time, only a delete file is written. This can be an equality delete file or a positional delete file. As of this writing, Spark doesn’t write equality deletes, but it is capable of reading them. The advantage of using this option is that your writes can be much quicker because you are not rewriting an entire data file. Suppose you want to delete a specific user’s data in a table because of GDPR requirements. Iceberg will simply write a delete file specifying the locations of the user’s records in the corresponding data files. So whenever you read the table, Iceberg will dynamically apply those deletes and present a logical table where the user’s data is deleted even though the corresponding records are still present in the physical data files.

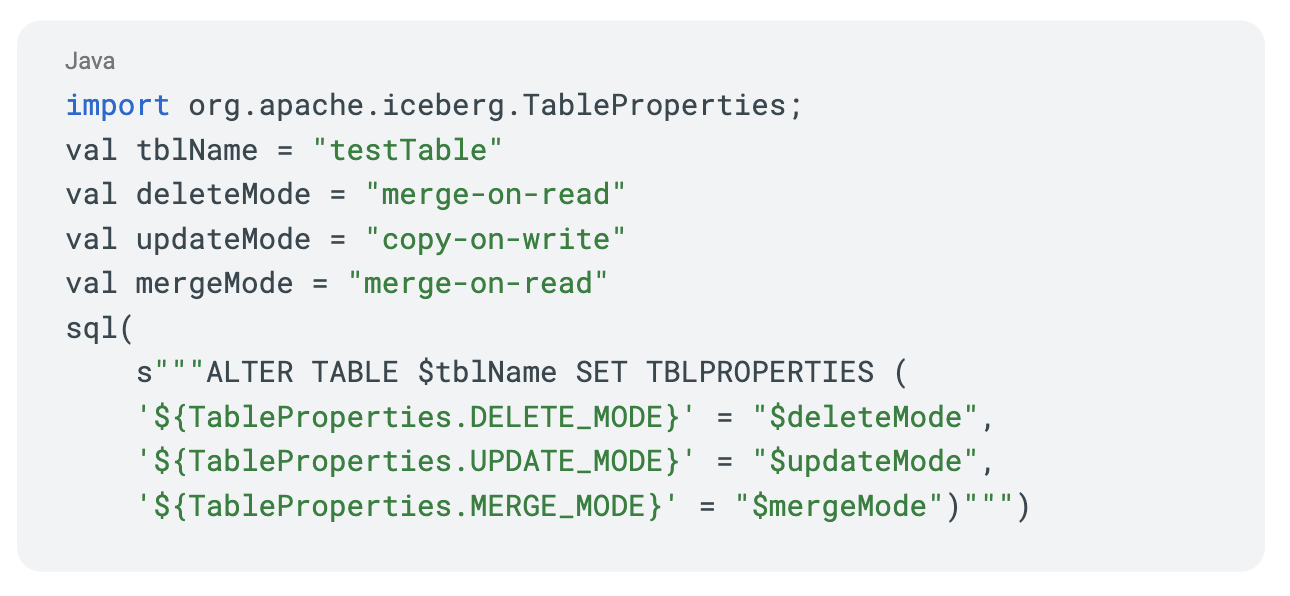

We enable the merge-on-read option for our customers by default. You can enable or disable it by setting the write.delete.mode, write.update.mode, and write.merge.mode table properties based on your requirements. See Write properties.
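For illustration, the write mode for deletes, updates, and merges is controlled by the Iceberg table properties `write.delete.mode`, `write.update.mode`, and `write.merge.mode`. The sketch below builds one `ALTER TABLE` statement that sets all three; the table name and the helper name are assumptions.

```python
def set_write_mode_sql(table: str, mode: str) -> str:
    """Build an ALTER TABLE statement that sets the delete/update/merge write mode."""
    if mode not in ("merge-on-read", "copy-on-write"):
        raise ValueError(f"unsupported write mode: {mode}")
    props = ", ".join(
        f"'write.{op}.mode'='{mode}'" for op in ("delete", "update", "merge")
    )
    return f"ALTER TABLE {table} SET TBLPROPERTIES ({props})"


# Hypothetical table name, for illustration only.
mode_stmt = set_write_mode_sql("my_catalog.db.events", "merge-on-read")
print(mode_stmt)
# With an active SparkSession named `spark` (assumed), run: spark.sql(mode_stmt)
```

The three properties are independent, so a table could, for example, use merge-on-read for deletes and copy-on-write for merges if that suits the workload better.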

Serializable vs snapshot isolation

The default isolation guarantee provided for the delete, update, and merge operations is serializable isolation. You can also change the isolation level to snapshot isolation. Both serializable and snapshot isolation guarantees provide a read-consistent view of your data. Serializable isolation is the stronger guarantee. For instance, say you have an employee table that maintains employee salaries. Now, you want to delete all records corresponding to employees with a salary greater than $100,000. Let’s say this salary table has five data files and three of those have records of employees with a salary greater than $100,000. When you initiate the delete operation, the three files containing employee salaries greater than $100,000 are selected. Then, if your “delete_mode” is merge-on-read, a delete file is written that points to the positions to delete in those three data files. If your “delete_mode” is copy-on-write, then all three data files are simply rewritten.

Irrespective of the delete_mode, assume that while the delete operation is happening a new data file containing a salary greater than $100,000 is written by another user. If the isolation guarantee you chose is snapshot, then the delete operation will succeed and only the salary records corresponding to the original three data files are removed from your table. The records in the data file that was written while your delete operation was in progress will remain intact. On the other hand, if your isolation guarantee is serializable, then your delete operation will fail and you will have to retry the delete from scratch. Depending on your use case you might want to reduce your isolation level to “snapshot.”
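The salary example can be sketched as a toy model. This is not Iceberg's implementation; it only mimics the outcome described above, with file names and salaries invented for the illustration.

```python
def commit_delete(files_at_start, files_added_concurrently, matches, isolation):
    """Toy model of committing a delete under the two isolation levels.

    files_at_start / files_added_concurrently: dict of file name -> list of salaries.
    matches: predicate selecting the records to delete.
    """
    if isolation not in ("serializable", "snapshot"):
        raise ValueError(f"unknown isolation level: {isolation}")
    # Files written by other users, while the delete ran, that contain matching rows.
    conflicting = [
        name for name, rows in files_added_concurrently.items()
        if any(matches(r) for r in rows)
    ]
    if isolation == "serializable" and conflicting:
        raise RuntimeError(f"commit failed, retry the delete: {conflicting}")
    # Only files visible when the delete started have records removed.
    result = {
        name: [r for r in rows if not matches(r)]
        for name, rows in files_at_start.items()
    }
    result.update(files_added_concurrently)
    return result


start = {"f1": [50_000, 120_000], "f2": [90_000], "f3": [200_000]}
concurrent = {"f4": [150_000]}  # written by another user during the delete


def is_high(salary):
    return salary > 100_000


after_snapshot = commit_delete(start, concurrent, is_high, "snapshot")
print(after_snapshot)  # f4 keeps its 150,000 record under snapshot isolation
```

Calling the same function with `"serializable"` raises an error for this input, which mirrors the retry described above. On a real table the level is set per operation through the `write.delete.isolation-level`, `write.update.isolation-level`, and `write.merge.isolation-level` table properties.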

The issue

The presence of too many delete files will eventually reduce read performance because, in the Iceberg V2 spec, every time a data file is read, all the corresponding delete files also need to be read (the Iceberg community is currently considering introducing a concept called “delete vector” in the future, which might work differently from the current spec). This could be very costly. The position delete files might also contain dangling deletes, that is, references to data that is no longer present in any of the current snapshots.

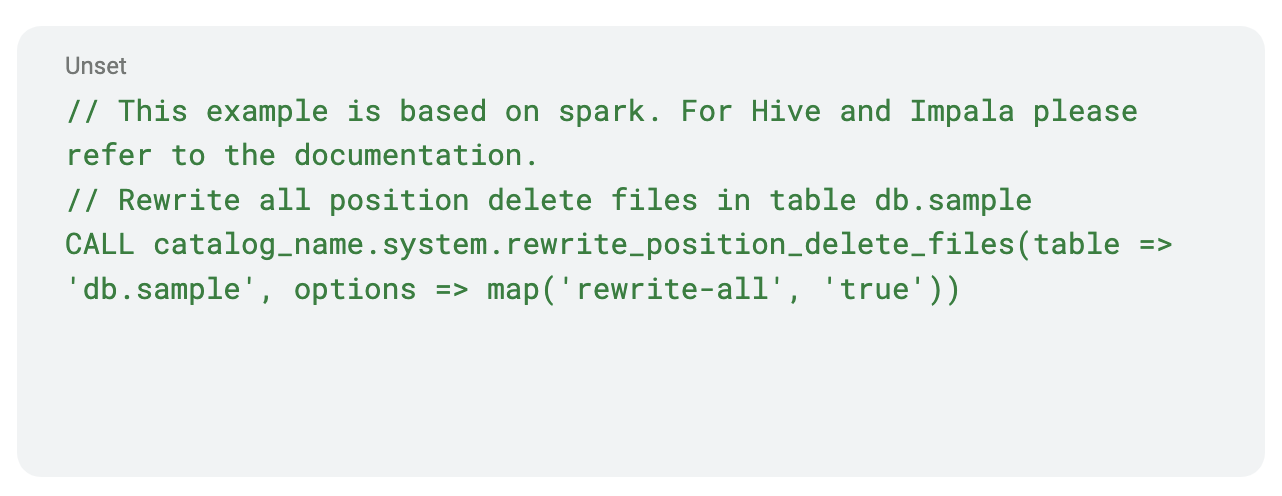

Solution: rewrite position deletes

For position delete files, compacting them mitigates the problem a little by reducing the number of delete files that need to be read and by compressing the delete data better, which makes reads faster. In addition, the procedure also removes the dangling deletes.

Rewrite position delete files

Iceberg provides a rewrite position delete files procedure in Spark SQL.
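A minimal sketch of invoking that procedure, assuming an Iceberg release that ships `rewrite_position_delete_files`. The catalog and table names and the helper name are placeholders; `rewrite-all` is shown only as an example option.

```python
def rewrite_position_deletes_sql(catalog: str, table: str, options=None) -> str:
    """Build a CALL statement for the rewrite_position_delete_files procedure."""
    call = f"CALL {catalog}.system.rewrite_position_delete_files(table => '{table}'"
    if options:
        # Options are passed as a flat map('key', 'value', ...) in Spark SQL.
        pairs = ", ".join(f"'{k}', '{v}'" for k, v in options.items())
        call += f", options => map({pairs})"
    return call + ")"


# Hypothetical catalog and table names, for illustration only.
pos_delete_stmt = rewrite_position_deletes_sql(
    "my_catalog", "db.events", {"rewrite-all": "true"}
)
print(pos_delete_stmt)
# With an active SparkSession named `spark` (assumed), run: spark.sql(pos_delete_stmt)
```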

But the presence of delete files still poses a performance problem. Also, regulatory requirements might force you to eventually delete the data physically rather than logically. This can be addressed by doing a major compaction and removing the delete files entirely, which is covered later in the blog.

Problem with small files

We typically want to minimize the number of files we touch during a read. Opening files is costly. File formats like Parquet work better if the underlying file size is large. Reading more of the same file is cheaper than opening a new file. In Parquet, you typically want your files to be around 512 MB and row-group sizes to be around 128 MB. During the write phase these are controlled by “write.target-file-size-bytes” and “write.parquet.row-group-size-bytes” respectively. You might want to leave the Iceberg defaults alone unless you know what you are doing.

In Spark, for example, the size of a Spark task in memory will need to be much higher to reach those defaults, because when data is written to disk it will be compressed in Parquet/ORC. So getting your files to be of the desirable size is not easy unless your Spark task size is big enough.

Another problem arises with partitions. Unless aligned properly, a Spark task might touch multiple partitions. Let’s say you have 100 Spark tasks and each of them needs to write to 100 partitions. Together they will write 10,000 small files. Let’s call this problem partition amplification.

Solution: use distribution-mode in write

The amplification problem can be addressed at write time by setting the appropriate write distribution mode in write properties. Insert distribution is controlled by “write.distribution-mode” and defaults to none. Delete distribution is controlled by “write.delete.distribution-mode” and defaults to hash, update distribution is controlled by “write.update.distribution-mode” and defaults to hash, and merge distribution is controlled by “write.merge.distribution-mode” and defaults to none.

The three write distribution modes that are available in Iceberg as of this writing are none, hash, and range. When your mode is none, no data shuffle occurs. You should use this mode only when you don’t care about the partition amplification problem or when you know that each task in your job only writes to a specific partition.

When your mode is set to hash, your data is shuffled by using the partition key to generate the hashcode so that each resultant task will only write to a specific partition. When your distribution mode is range, your data is distributed such that it is ordered by the partition key, or by the sort key if the table has a SortOrder.
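To make the amplification arithmetic concrete, here is a toy simulation. It is not Spark or Iceberg code; the modulo is only a stand-in for hashing the partition key. It counts how many files result when each (task, partition) pair that receives data produces one file.

```python
def count_files(rows, num_tasks, mode):
    """Count output files, assuming one file per (task, partition) pair.

    rows: list of partition ids (ints), one entry per record.
    """
    files = set()
    for i, partition in enumerate(rows):
        if mode == "none":
            task = i % num_tasks          # records land on tasks regardless of partition
        elif mode == "hash":
            task = partition % num_tasks  # stand-in for hashing the partition key
        else:
            raise ValueError(f"unsupported mode: {mode}")
        files.add((task, partition))
    return len(files)


# 100 partitions with 100 records each, written by 100 tasks.
rows = [p for p in range(100) for _ in range(100)]
files_none = count_files(rows, 100, "none")
files_hash = count_files(rows, 100, "hash")
print(files_none, files_hash)
```

With these inputs the unshuffled write touches every partition from every task, while the hashed write sends each partition to a single task, which is the effect the hash distribution mode is meant to achieve.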

Using hash or range can get tricky because you are now repartitioning the data based on the number of partitions your table might have. This can cause your Spark tasks after the shuffle to be either too small or too large. This problem can be mitigated by enabling adaptive query execution in Spark by setting “spark.sql.adaptive.enabled=true” (this is enabled by default from Spark 3.2). Several configs are available in Spark to adjust the behavior of adaptive query execution. Leaving the defaults as they are, unless you know exactly what you are doing, is probably the best option.

Even though the partition amplification problem can be mitigated by setting the write distribution mode appropriate for your job, the resultant files could still be small simply because the Spark tasks writing them are small. Your job cannot write more data than it has.

Solution: rewrite data files

To address the small files problem and the delete files problem, Iceberg provides a feature to rewrite data files. This feature is currently available only with Spark. The rest of the blog will go into this in more detail. This feature can be used to compact or even expand your data files, incorporate deletes from delete files corresponding to the data files that are being rewritten, provide better data ordering so that more data can be filtered directly at read time, and more. It is one of the most powerful tools in the toolbox that Iceberg provides.

RewriteDataFiles

Iceberg provides a rewrite data files procedure in Spark SQL.
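As a hedged sketch, the helper below builds `rewrite_data_files` calls for a plain bin-pack compaction and for a Z-order rewrite. The catalog and table names, the column names `c1` and `c2`, and the helper name are placeholders for illustration.

```python
def rewrite_data_files_sql(catalog: str, table: str,
                           strategy: str = "binpack", sort_order=None) -> str:
    """Build a CALL statement for Iceberg's rewrite_data_files Spark procedure."""
    if strategy not in ("binpack", "sort"):
        raise ValueError(f"unsupported strategy: {strategy}")
    args = [f"table => '{table}'", f"strategy => '{strategy}'"]
    if sort_order:
        # e.g. 'id DESC NULLS LAST' for a regular sort, or 'zorder(c1,c2)' for Z order
        args.append(f"sort_order => '{sort_order}'")
    return f"CALL {catalog}.system.rewrite_data_files({', '.join(args)})"


# Hypothetical catalog, table, and column names, for illustration only.
binpack_stmt = rewrite_data_files_sql("my_catalog", "db.events")
zorder_stmt = rewrite_data_files_sql(
    "my_catalog", "db.events", strategy="sort", sort_order="zorder(c1,c2)"
)
print(binpack_stmt)
print(zorder_stmt)
# With an active SparkSession named `spark` (assumed), run: spark.sql(binpack_stmt)
```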

See the RewriteDataFiles JavaDoc for all the supported options.

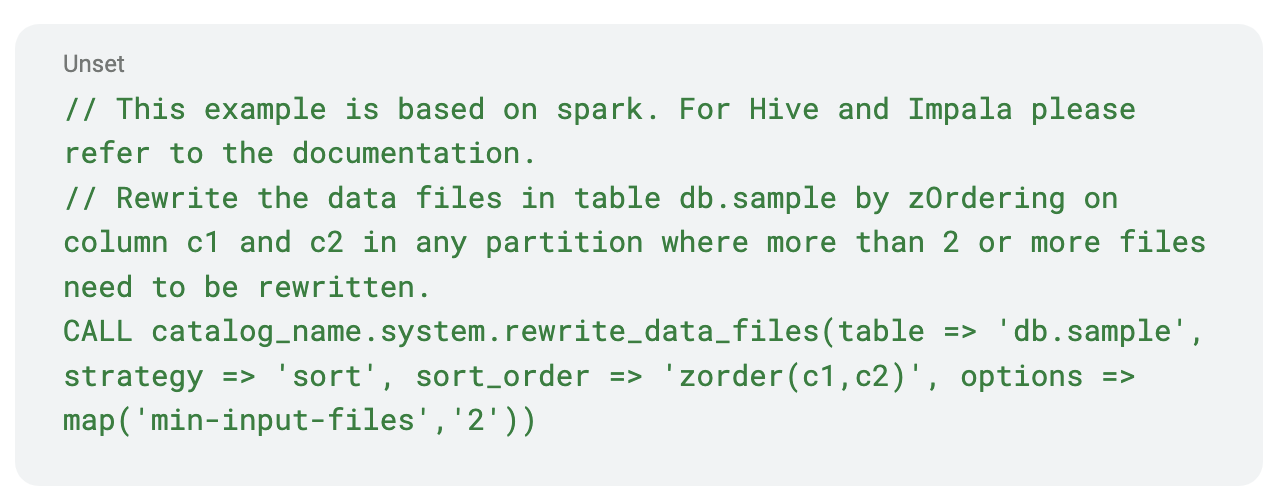

Now let’s discuss what the strategy option means, because understanding it is important for getting more out of the rewrite data files procedure. There are three strategy options available: Bin Pack, Sort, and Z Order. Note that when using the Spark procedure, the Z Order strategy is invoked by simply setting the sort_order to “zorder(columns…).”

Strategy option

- Bin Pack

  - It is the cheapest and fastest.

  - It combines files that are too small, using the bin packing approach to reduce the number of output files.

  - No data ordering is changed.

  - No data is shuffled.

- Sort

  - Much more expensive than Bin Pack.

  - Provides total hierarchical ordering.

  - Read queries only benefit if the columns used in the query are ordered.

  - Requires data to be shuffled using range partitioning before writing.

- Z Order

  - Most expensive of the three options.

  - The columns being used should have some kind of intrinsic clusterability, and there still needs to be a sufficient amount of data in each partition, because Z ordering only helps in eliminating files from a read scan, not in eliminating row groups. If both conditions hold, then queries can prune a lot of data at read time.

  - It only makes sense if more than one column is used in the Z order. If only one column is needed, then regular sort is the better option.

  - See https://blog.cloudera.com/speeding-up-queries-with-z-order/ to learn more about Z ordering.
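To give a feel for the bin packing idea behind the cheapest strategy, here is a simplified first-fit-decreasing sketch. It is an illustration only and not Iceberg's actual planner; the file sizes and the 512 MB target are example values.

```python
def bin_pack(file_sizes_mb, target_mb):
    """Group files so each group's total size stays at or below target_mb.

    Greedy first-fit-decreasing: place each file, largest first, into the
    first group that still has room, opening a new group when none does.
    """
    groups = []
    for size in sorted(file_sizes_mb, reverse=True):
        for group in groups:
            if sum(group) + size <= target_mb:
                group.append(size)
                break
        else:
            groups.append([size])
    return groups


small_files = [100, 200, 50, 300, 150, 60]  # sizes in MB, invented for the example
packed = bin_pack(small_files, target_mb=512)
print(packed)  # each inner list would be rewritten as one output file
```

In this example six small files collapse into two groups. No records are reordered, which is why bin packing avoids the shuffle cost of the sort-based strategies.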

Commit conflicts

Iceberg uses optimistic concurrency control when committing new snapshots. So, when we use rewrite data files to update our data, a new snapshot is created. But before that snapshot is committed, a check is done to see if there are any conflicts. If a conflict occurs, all the work done could potentially be discarded. It is important to plan maintenance operations to minimize potential conflicts. Let us discuss some of the sources of conflicts.

- If only inserts occurred between the start of the rewrite and the commit attempt, then there are no conflicts. This is because inserts result in new data files, and the new data files can be added to the snapshot for the rewrite and the commit reattempted.

- Every delete file is associated with one or more data files. If a new delete file corresponding to a data file that is being rewritten is added in a future snapshot (B), then a conflict occurs because the delete file is referencing a data file that is already being rewritten.

Conflict mitigation

- If you can, try pausing jobs that write to your tables during the maintenance operations. Or at least make sure deletes are not written to files that are being rewritten.

- Partition your table in such a way that all new writes and deletes are written to a new partition. For instance, if your incoming data is partitioned by date, all your new data can go into a partition by date. You can run rewrite operations on partitions with older dates.

- Take advantage of the filter option in the rewrite data files Spark action to select the files to be rewritten based on your use case so that no delete conflicts occur.

- Enabling partial progress will help save your work by committing groups of files before the entire rewrite completes. Even if one of the file groups fails, other file groups could succeed.
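The last two mitigations can be combined in one call. The sketch below restricts the rewrite to older date partitions with the procedure's `where` argument and turns on partial progress through its options. The table name, the `event_date` column, the cutoff date, and the helper name are assumptions for the example.

```python
def rewrite_old_partitions_sql(catalog: str, table: str, date_column: str,
                               before_date: str, max_commits: int = 10) -> str:
    """Build a rewrite_data_files call limited to older partitions, with partial progress."""
    return (
        f"CALL {catalog}.system.rewrite_data_files("
        f"table => '{table}', "
        f"where => '{date_column} < \"{before_date}\"', "
        f"options => map('partial-progress.enabled', 'true', "
        f"'partial-progress.max-commits', '{max_commits}'))"
    )


# Hypothetical catalog, table, and column names, for illustration only.
old_parts_stmt = rewrite_old_partitions_sql(
    "my_catalog", "db.events", "event_date", "2023-01-01"
)
print(old_parts_stmt)
# With an active SparkSession named `spark` (assumed), run: spark.sql(old_parts_stmt)
```

Limiting the rewrite to partitions that no longer receive writes keeps it away from files that concurrent deletes are likely to touch.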

Conclusion

Iceberg provides several features that a modern data lake needs. With a little care, planning, and an understanding of Iceberg’s architecture, one can take maximum advantage of all the awesome features it provides.

To try some of these Iceberg features yourself, you can sign up for one of our next live hands-on labs.

You can also watch the webinar to learn more about Apache Iceberg and see the demo to learn about the latest capabilities.